Here on the GOV.UK Verify team we’re always looking for ways to improve the service so that more users are able to securely access services online. This post describes a recent change we made to the hub, how we made an unexpected 10% improvement and, most important of all, what we learnt.

Based on the answers to a few basic questions, the GOV.UK Verify hub (which allows communication between the user, the certified company, and the service on GOV.UK) helps users choose a certified company that is most likely to be able to verify their identity.

Asking questions in the best way, so users don’t waste their time

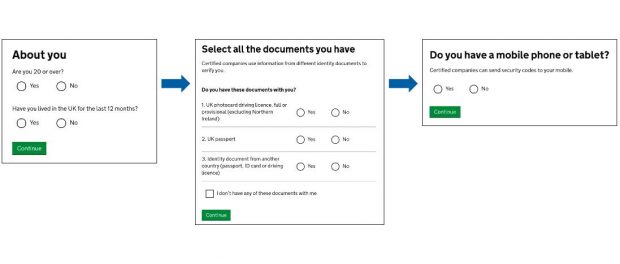

The hub asks 3 sets of questions. Until recently, the questions appeared to users in this order:

- What documents do you have?

- Do you have a mobile phone or tablet, and can you install apps?

- Are you resident in the UK, and are you aged 20 or over?

We set the order of these questions in July 2015 because, at that point, users needed either a UK passport or a UK driving licence to verify their identity. Some users don’t have either of these documents and, of the 3 sets of questions, ‘Select all the documents you have’ was the most likely to cause users to drop out and look for another way to access the government service they wanted. So we asked this question first, so that users without a UK passport or UK driving licence didn’t waste their time answering further questions unnecessarily.

Recent improvements by certified companies

Users no longer need a UK passport or a UK driving licence. Thanks to recent improvements made by the certified companies, some of which can now verify users who don’t have either document, all users can progress past this first question.

Other improvements introduced by certified companies have expanded the methods for 2-factor authentication. Users without a mobile phone or a landline are now able to complete verification, for example by downloading a code to their computer. As a result, everyone can now progress past the second question about their devices, whether they have a mobile phone or not.

Changing the order of the questions - again

Now everyone can progress past the first 2 sets of questions, including those without a UK driving licence or UK passport, and those without a telephone.

However, users who are under 20 or not resident in the UK are not yet able to proceed and choose a certified company to verify them, because certified companies are less likely to have the data coverage needed to verify these users. This will change as demographic coverage improves, but for now users who cannot proceed with GOV.UK Verify are directed to alternative routes to use the service.

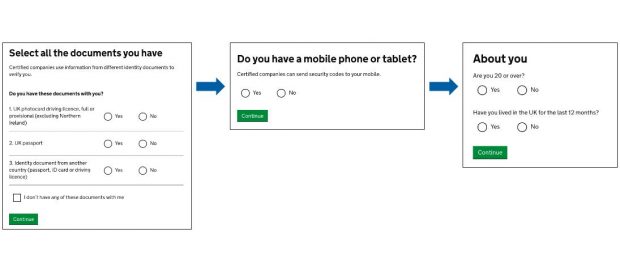

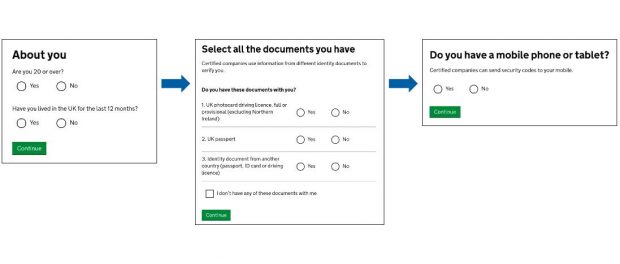

Moving the age and residency question to the beginning of the user journey seemed like an obvious thing to do to save those users a bit of time. The user journey now looks like this:

This change lets users who won’t be able to verify find out much sooner in their journey that they can’t proceed. We initially thought that asking people the same questions in a different order probably wouldn’t affect the performance of the service. However, analysing the performance data after the change revealed something unexpected.
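The effect of the reordering on users who cannot proceed can be sketched in a few lines of Python. This is only an illustration, not the hub’s actual code: the names `QuestionSet`, `first_blocking_step`, `OLD_ORDER` and `NEW_ORDER` are hypothetical, the rules simply encode what this post says (only the age and residency question can now stop a user), and the relative order of the documents and devices questions in the new journey is an assumption.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass
class QuestionSet:
    """One set of hub questions plus a rule saying when it stops the user."""
    name: str
    blocks_user: Callable[[Dict[str, bool]], bool]


# Hypothetical rules based on this post: only age and residency can stop a user.
AGE_AND_RESIDENCY = QuestionSet(
    "age_and_residency",
    lambda answers: not (answers["aged_20_or_over"] and answers["uk_resident"]),
)
DOCUMENTS = QuestionSet("documents", lambda answers: False)
DEVICES = QuestionSet("devices", lambda answers: False)

OLD_ORDER = [DOCUMENTS, DEVICES, AGE_AND_RESIDENCY]
# Assumption: documents and devices keep their relative order in the new journey.
NEW_ORDER = [AGE_AND_RESIDENCY, DOCUMENTS, DEVICES]


def first_blocking_step(
    answers: Dict[str, bool], order: List[QuestionSet]
) -> Optional[int]:
    """Return the 1-based position of the question set that stops the user.

    Returns None if the user can go on to choose a certified company.
    """
    for position, question_set in enumerate(order, start=1):
        if question_set.blocks_user(answers):
            return position
    return None


under_20 = {"aged_20_or_over": False, "uk_resident": True}
print(first_blocking_step(under_20, OLD_ORDER))
print(first_blocking_step(under_20, NEW_ORDER))
```

In this sketch a user under 20 is stopped at the third set of questions in the old order and at the first set in the new order, which is the time saving the change was meant to deliver.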

An unexpected 10% improvement

A week after the change was made, we noticed that performance had improved: the rate at which new users successfully completed the verification process had risen by 10%. This increase has been maintained over the last 6 weeks. More users were answering all 3 sets of questions, and there was a similar increase in users going on to choose a certified company and return with a verified account.

The effect varied across the 10 services connected to GOV.UK Verify; those services with lower completion rates saw the largest increase in users answering all the questions, whilst those services with high completion rates saw little or no change.

We won’t know for certain what caused this effect without some qualitative research. One possibility is that users who have already answered a couple of easy questions (‘are you over 20?’ and ‘do you live in the UK?’) are then more prepared to go on to answer more difficult questions (‘do you have these documents with you?’).

What we do know is that there are some valuable lessons that we’ve learnt from this change, which we can apply to further iterate and improve GOV.UK Verify.

What we learnt

We should experiment more to find improvements

Small, seemingly innocuous changes can have bigger effects than expected, and some of the changes we make will have effects that we can’t predict. What was surprising about this change was that something as simple as changing the order in which users are asked questions can result in a big improvement in performance. Not all changes will have this effect, and it’s only through experimenting that we’ll be able to find those that make a positive impact.

Regular A/B testing is a safety net and should be used for any change

We were lucky that this change went beyond our expectations and made the service perform better. But regular A/B testing adds a level of rigour that makes it easier to spot subtle effects, and protects the team from inadvertently introducing bad changes. Without A/B testing it’s more difficult to see the true effect of whatever change has been made; a large effect is likely to be picked up in our before/after analysis, but smaller, subtler effects can easily be lost in the noise.
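To illustrate how an effect can be “lost in the noise”, here is a minimal sketch of a two-proportion z-test, one common way to compare completion rates between the two groups in an A/B test. It is not a description of our testing framework: the function name `two_proportion_z_test` is hypothetical and the figures in the example are invented for illustration, not GOV.UK Verify data.

```python
import math


def two_proportion_z_test(successes_a, total_a, successes_b, total_b):
    """Compare two completion rates using a pooled two-proportion z-test.

    Returns (z, p_value), where p_value is two-sided.
    """
    rate_a = successes_a / total_a
    rate_b = successes_b / total_b
    # Pooled rate under the assumption that both groups share one true rate.
    pooled = (successes_a + successes_b) / (total_a + total_b)
    standard_error = math.sqrt(
        pooled * (1 - pooled) * (1 / total_a + 1 / total_b)
    )
    z = (rate_b - rate_a) / standard_error
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Invented figures: 40% completion in group A, 44% in group B.
print(two_proportion_z_test(400, 1000, 440, 1000))
print(two_proportion_z_test(4000, 10000, 4400, 10000))
```

With these made-up figures, the same difference between 40% and 44% gives a p-value of about 0.07 with 1,000 users in each group, so it could plausibly be noise, but a p-value far below 0.001 with 10,000 users in each group.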

We’ve seen first-hand that a small change, in this case the order in which we ask questions, can uncover an interesting effect. We’ve recently resumed regular A/B testing following the installation of a new testing framework, and our plan now is to test as many changes as possible.

You can keep up to date with our progress, how we’re improving GOV.UK Verify, and what we’re learning by subscribing to the blog.

2 comments

Comment by MarkK posted on

It might also be related to the bug that often leaves one or more of the IdPs off the results list.

If there are currently 7 it seems odd to be told there's 1 that is likely and 4 unlikely, or 3 likely and 3 unlikely.

Comment by Oliver posted on

Hi Mark - based on the answers provided in the hub a company might not be able to verify the user. So, if you see 3 companies who can verify you, and 3 companies who are unlikely to be able to verify you, the 7th company is unable to verify you based on the answers provided, and will not be shown.